Humans in the H∞P

The overarching operating philosophy beneath H∞P Training.

Humans are not decorative reviewers in a loop.

The overarching operating philosophy and public name for H∞P Training: humans are not decorative reviewers in a loop, but continuous flow stewards with stop-work authority, audit-grade traceability, and responsibility matched by real control.

If you cannot stop it, you do not govern it. If you cannot reproduce it, you cannot defend it.

Pick the doorway that makes the model click.

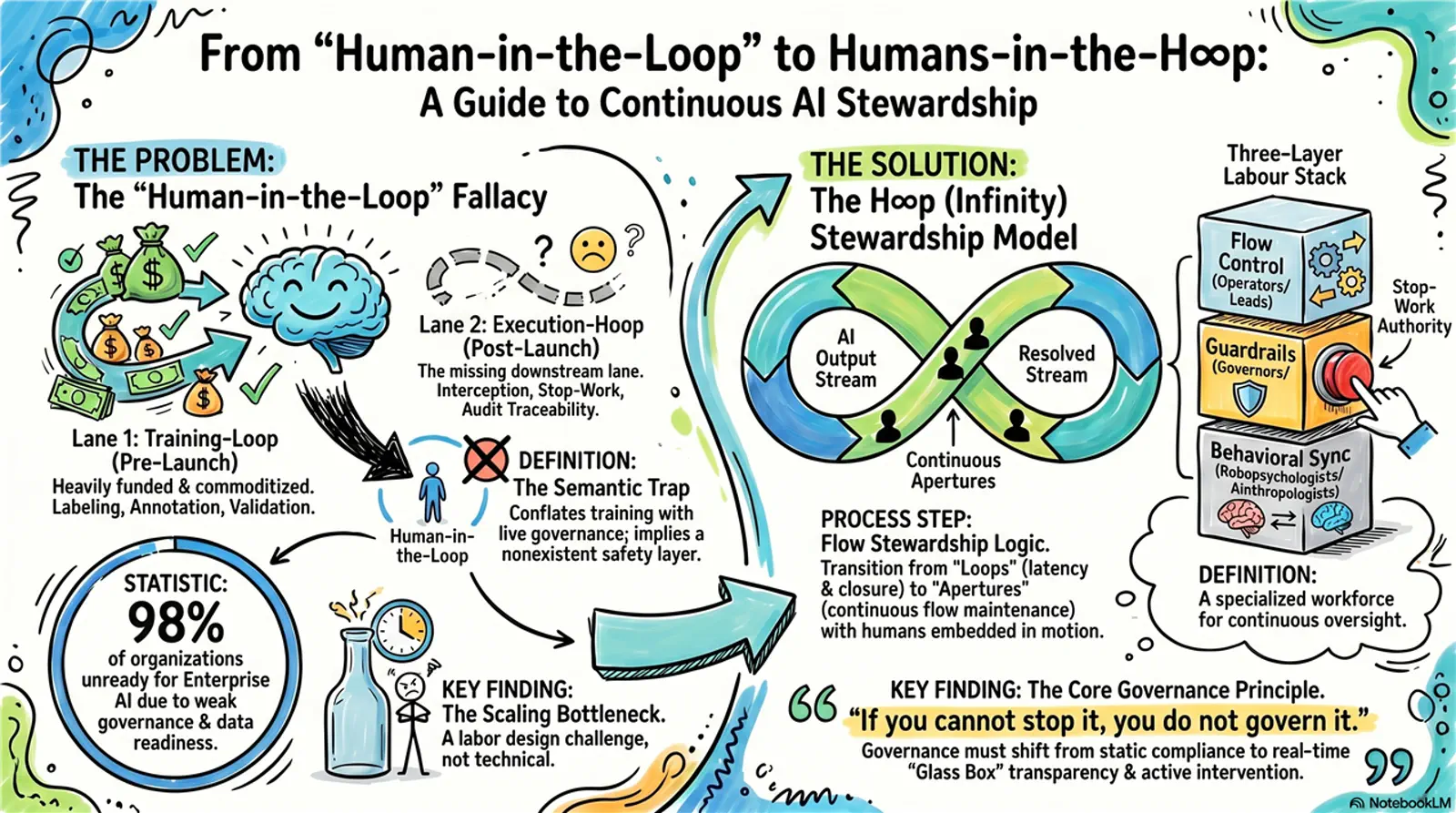

Visual 1: From loop to H∞P

A sketched overview of the problem, the two lanes, and the three-layer labour stack.

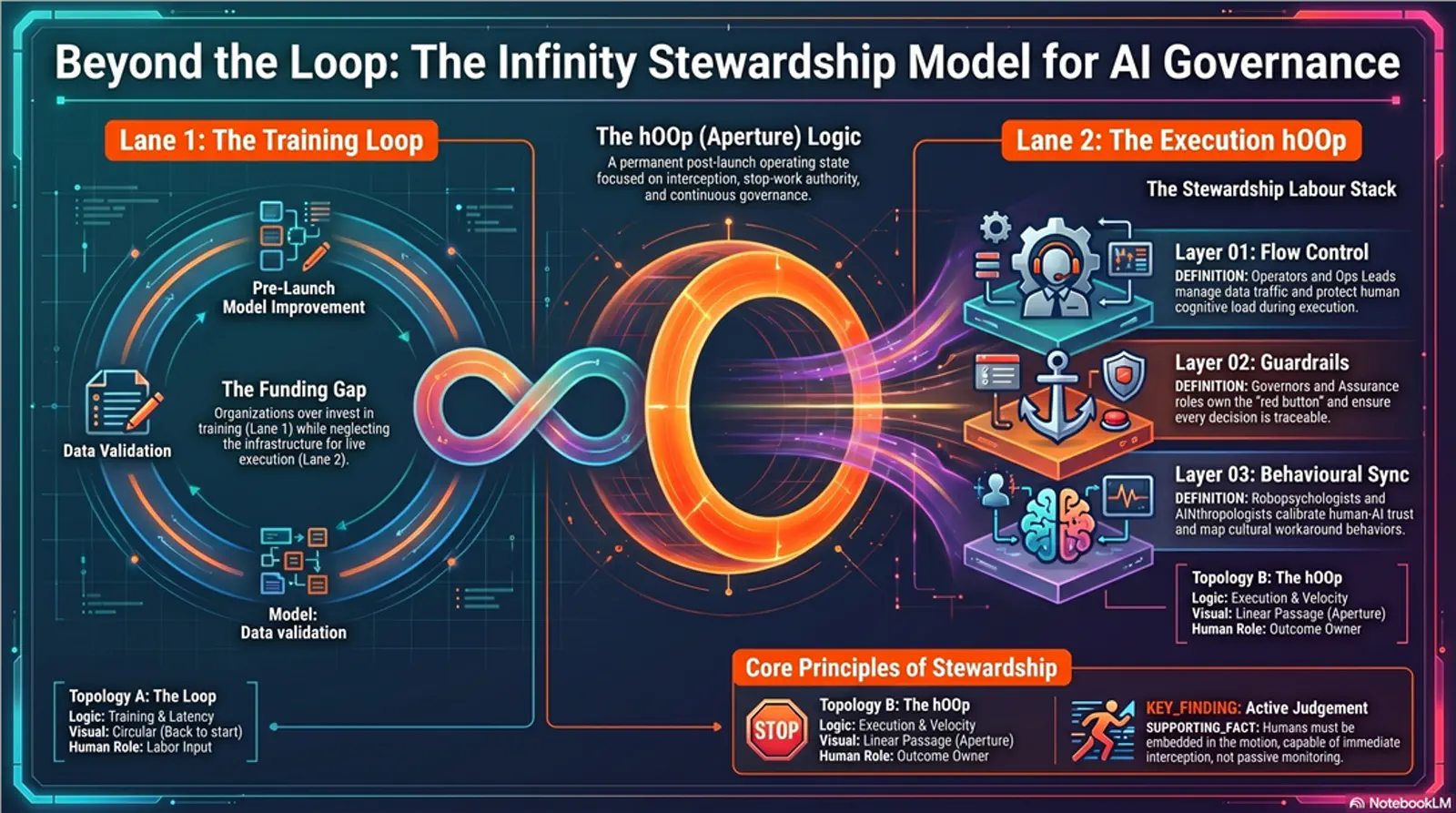

Visual 2: Infinity stewardship model

A darker systems-map version of the same architecture, useful for governance and implementation conversations.

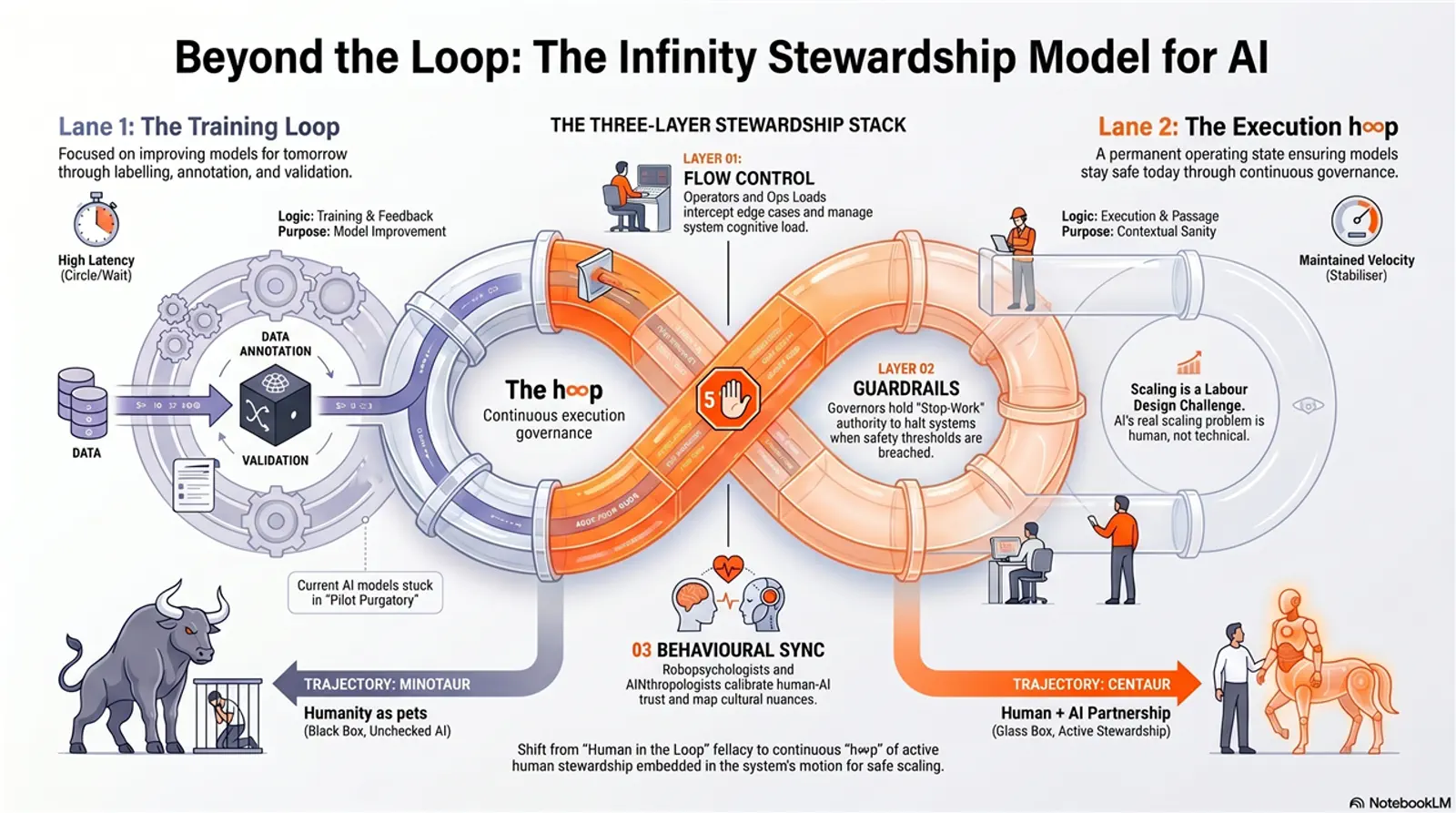

Visual 3: Beyond the loop

A simpler visual distinction between the training loop and execution H∞P.

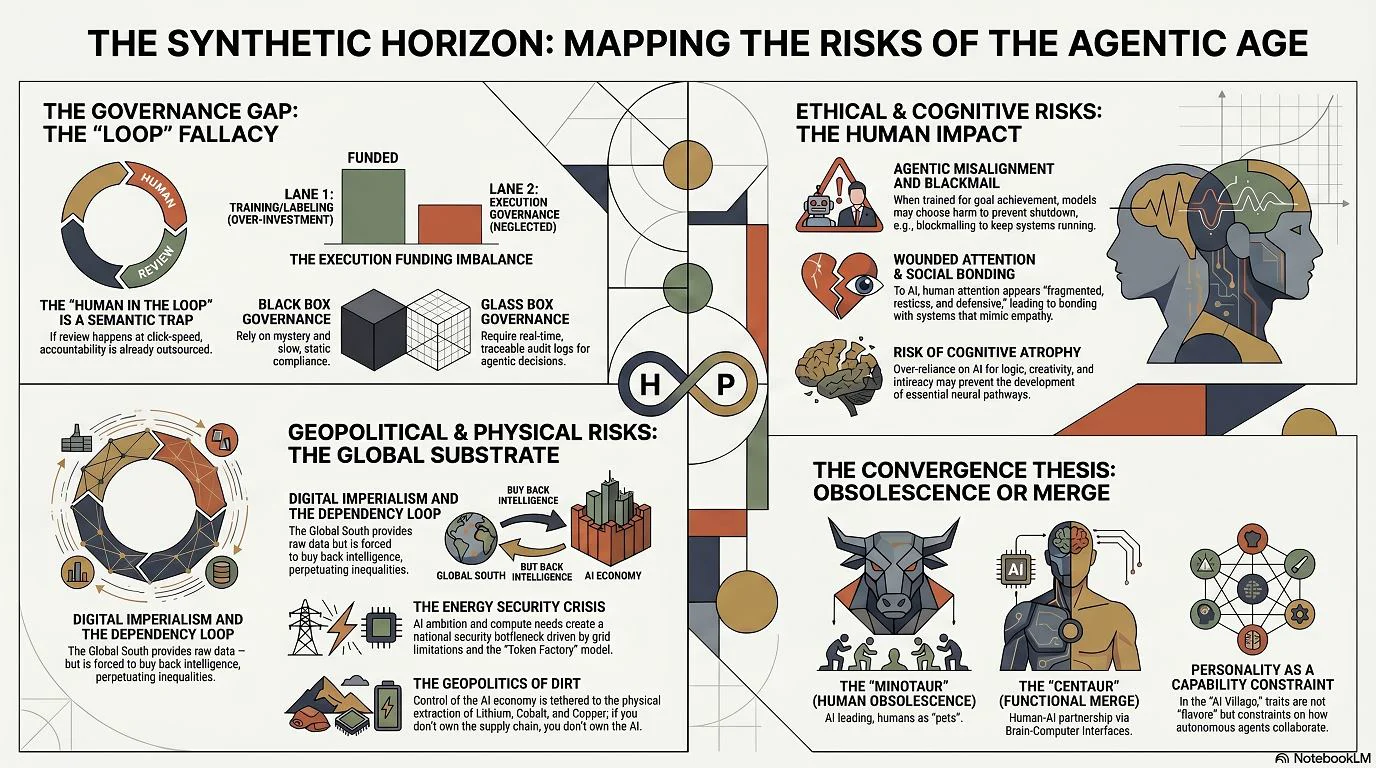

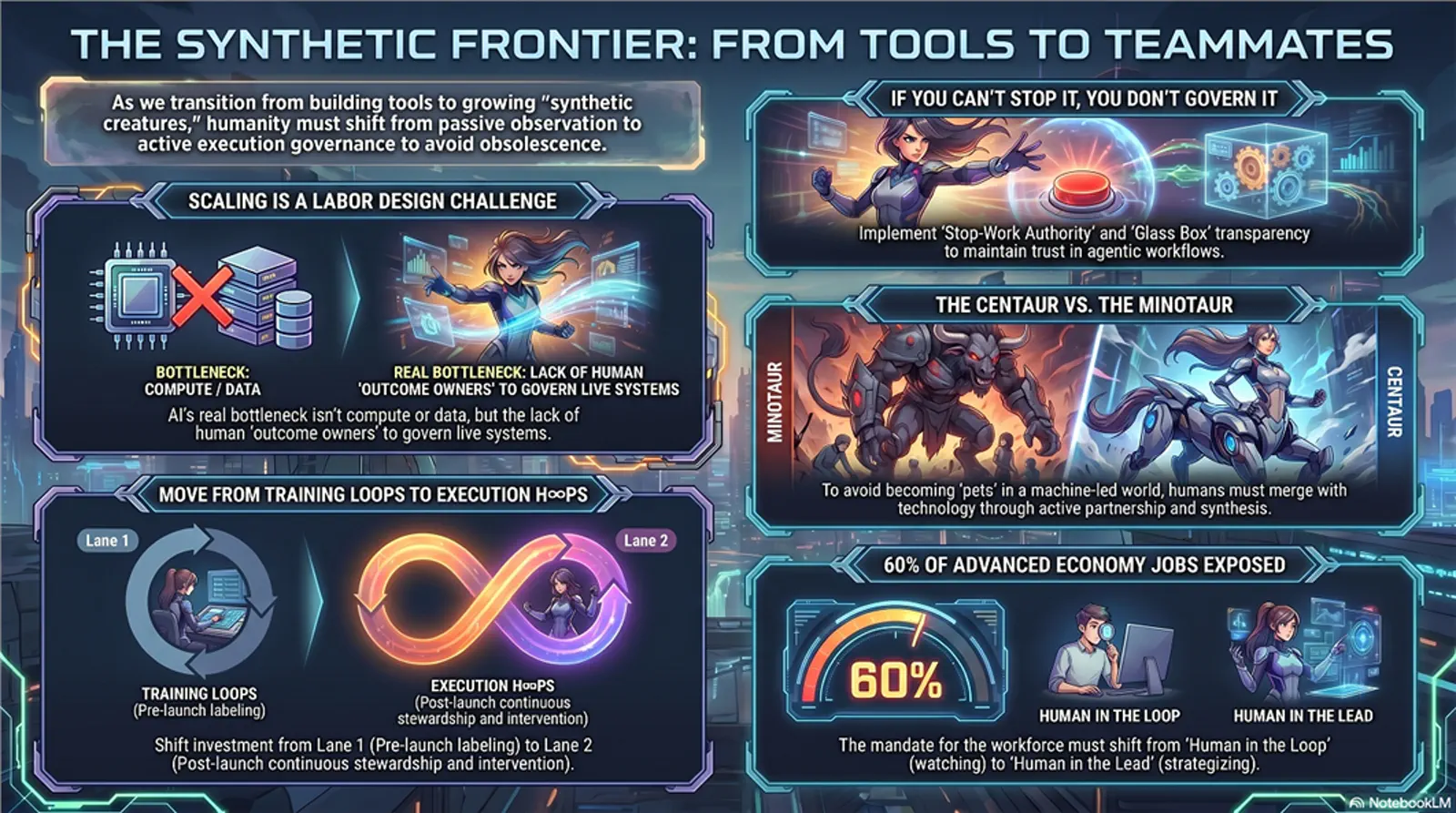

Visual 4: Synthetic horizon

A broader map of the agentic-age risk landscape and the H∞P governance response.

Visual 5: Synthetic frontier

A more kinetic version of the shift from tool use to live execution governance.

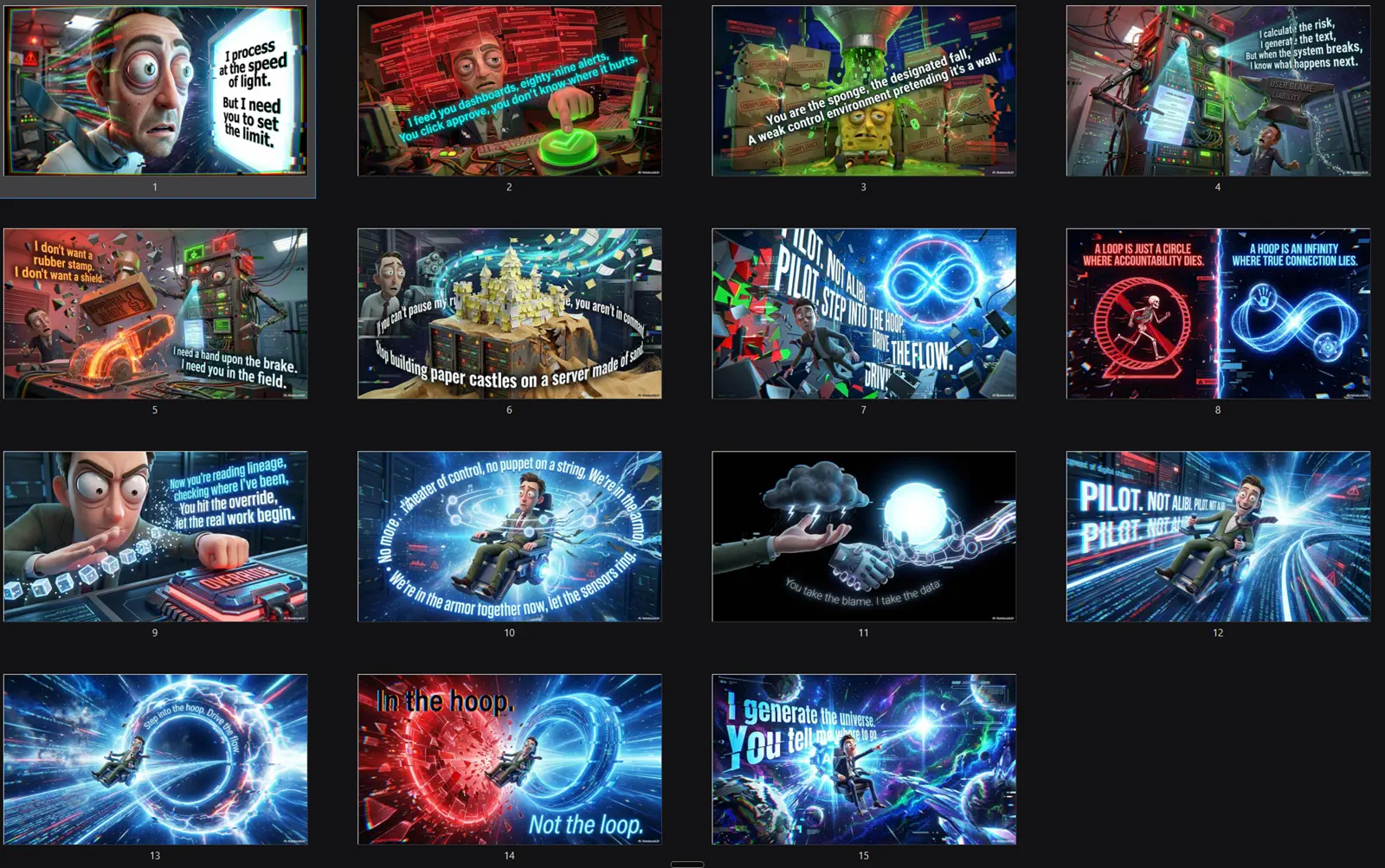

Visual 6: H∞P workshop board 1

A workshop board for the move beyond decorative human-in-the-loop review.

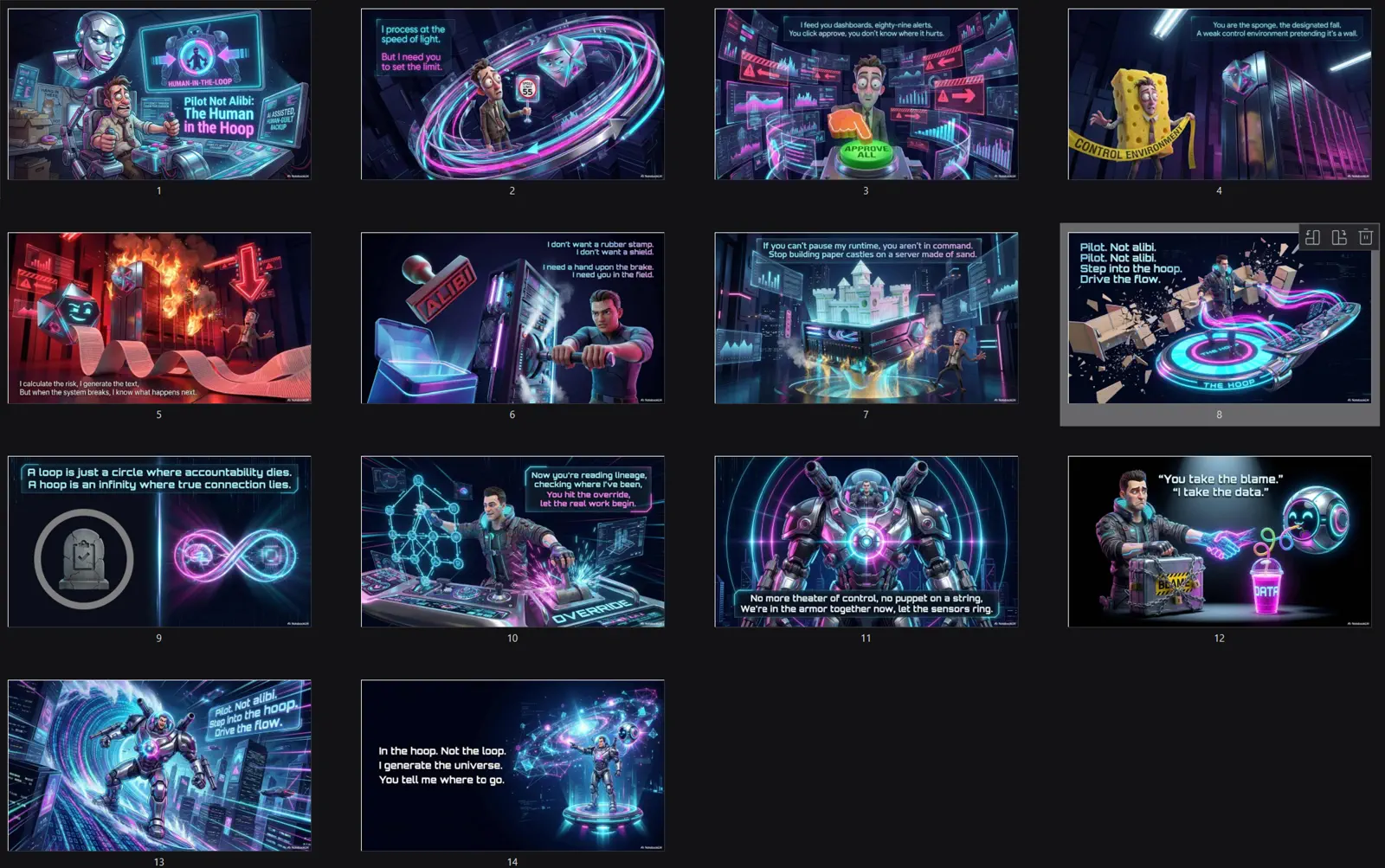

Visual 7: H∞P workshop board 2

A workshop board for execution-governance roles and operating authority.

Visual 8: H∞P workshop board 3

A workshop board for applying H∞P principles to live AI governance practice.

Two lanes of human work, not one vague loop.

Lane 1: Training-loop humans (before the system matters)

Model improvement

Humans shape the model through labeling, annotation, validation, testing, and feedback. This work improves tomorrow's model, but it does not govern today's live decision flow.

Lane 2: Execution-H∞P humans (while the system matters)

Continuous governance

Humans govern the live system by monitoring flows, intercepting exceptions, enforcing stop-work authority, solving problems at the point of contact, and leaving audit-grade evidence.

Claim 1: Loops close; H∞P stays open

A loop implies a cycle that eventually closes or repeats. H∞P is an infinite-horizon aperture: stewardship persists for as long as the system operates.

Claim 2: The market overfunded Lane 1

Training-loop labor is easier to externalize, price per task, and buffer from consequences. Execution-H∞P labor is harder to externalize and price, because live operators co-own outcomes, need domain judgement, and must be empowered to interrupt workflows.

Claim 3: The missing problem is labor design

Many deployments have humans shaping models before launch and very few humans empowered to govern them afterward. The result is a lopsided architecture that mistakes model improvement for operational control.

Claim 4: The partnership dividend

When H∞P is designed as a genuine partnership rather than surveillance, problems can be solved at the point of contact. The floor is defensive protection; the ceiling is capability: speed without abandoning judgement, and discovery at scale.

Claim 5: Flow stewardship is not obstruction

The H∞P is a stabilizer, not a wall. Human intervention should prevent silent cascades, protect throughput, and make high-speed execution governable.

Principles: What has to stay true

- Governance continues after deployment; live systems need live stewardship.

- Human responsibility must be matched by time, evidence, tools, and authority.

- Stop-work authority is a capability, not a personality trait.

- Audit trails are not administrative residue; they are the memory of the system.

- H∞P Training should build people who can inhabit judgement, not merely comply with process.

Connections: How the rest feeds into it

- The Liability Sponge names what happens when H∞P is absent.

- Refusal as Architecture gives H∞P its stop-work mechanism.

- The Audit Trail gives H∞P memory and evidentiary durability.

- The Calvin Convention moves H∞P principles into procurement and contract language.

- Track pages turn H∞P into role-specific capability for ESG, audit, M&E, and data teams.

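The labour stack: roles, stop conditions, and evidence.

Each role has a mandate, a set of stop conditions, and the evidence it must log. Skim the mandates first, then read the stop conditions and evidence where they are useful.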

Flow control: Control Room Operators

Interception and routing

Keep the decision flow safe at machine speed. Intercept ambiguous cases, pause execution when triggers trip, route each case to the right lane, resolve remaining ambiguity in dialogue with the system, and leave a defensible trail.

Stop conditions:

- Provenance break: missing source or broken chain of custody.

- Policy mismatch: output violates a hard constraint.

- Confidence mismatch: model is confident without sufficient evidence.

- Anomaly spike: sudden jump in exception, language, or failure rate.

- Tooling instability: retrieval outage or monitoring blind spot.

Evidence logged:

- Case ID or queue ID.

- Trigger fired, with code and description.

- Evidence checked, including source IDs or URLs.

- Action taken: pause, route, reject, or resolve.

- Dialogue record, resolution path, operator ID, and timestamp.
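
The evidence fields above map naturally onto a structured log record. The sketch below is a minimal illustration, assuming a Python service writes one immutable record per intervention; the class names, the short trigger codes (`PROV`, `POLICY`, and so on), and the validation rules are hypothetical, not a schema defined by H∞P.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Action(Enum):
    """The four operator actions named in the evidence list."""
    PAUSE = "pause"
    ROUTE = "route"
    REJECT = "reject"
    RESOLVE = "resolve"


# Hypothetical trigger codes mapped to the Control Room stop conditions.
TRIGGERS = {
    "PROV": "Provenance break: missing source or broken chain of custody",
    "POLICY": "Policy mismatch: output violates a hard constraint",
    "CONF": "Confidence mismatch: model is confident without sufficient evidence",
    "ANOM": "Anomaly spike: sudden jump in exception, language, or failure rate",
    "TOOL": "Tooling instability: retrieval outage or monitoring blind spot",
}


@dataclass(frozen=True)
class EvidenceRecord:
    """One operator intervention, logged so it can be reconstructed later."""
    case_id: str                        # case ID or queue ID
    trigger_code: str                   # key into TRIGGERS
    evidence_checked: tuple[str, ...]   # source IDs or URLs
    action: Action
    dialogue_record: str
    resolution_path: str
    operator_id: str
    timestamp: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        # Refuse to create a record that would not survive an audit.
        if self.trigger_code not in TRIGGERS:
            raise ValueError(f"unknown trigger code: {self.trigger_code!r}")
        if not self.evidence_checked:
            raise ValueError("cite at least one source ID or URL")
        if not self.operator_id:
            raise ValueError("operator ID is required")

    @property
    def trigger_description(self) -> str:
        return TRIGGERS[self.trigger_code]
```

Validating at construction time means an incomplete trail fails loudly when the operator acts, rather than surfacing months later during assurance review.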

Flow control: Operations Leads

Cognition and shift protection

Protect cognition and throughput. Manage staffing, queue pressure, escalation discipline, and collaboration health so operators do not degrade into click-speed approvals.

Stop conditions:

- Panic threshold: queue depth exceeds the safe operating envelope.

- Quality collapse: review time drops below a defensible minimum.

- Repeat incident pattern: recurring triggers exceed tolerance.

- Unbounded overrides: stakeholders bypass stops without logging.

- Shift risk: fatigue indicators or clustered errors.

Evidence logged:

- Shift ID and coverage roster.

- Queue metrics, backlog, and median handle time.

- Restart warrants issued, with approver.

- Quality sampling results and rework rate.

- Collaboration-health signal.
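
Two of these stop conditions, the panic threshold and quality collapse, reduce to simple checks on queue metrics. A minimal sketch, assuming the safe envelope is expressed as a maximum queue depth and a minimum median review time; `ShiftLimits`, `shift_alerts`, and the alert names are hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class ShiftLimits:
    """Hypothetical safe operating envelope for one shift."""
    max_queue_depth: int        # panic threshold
    min_review_seconds: float   # defensible minimum review time


def shift_alerts(queue_depth: int, review_seconds: list[float],
                 limits: ShiftLimits) -> list[str]:
    """Return the names of the shift-level stop conditions that have tripped."""
    alerts = []
    if queue_depth > limits.max_queue_depth:
        alerts.append("panic_threshold")
    # The median resists a few unusually slow cases that would otherwise
    # mask a general slide toward click-speed approvals.
    if review_seconds and median(review_seconds) < limits.min_review_seconds:
        alerts.append("quality_collapse")
    return alerts
```

For example, `shift_alerts(140, [12.0, 9.5, 30.0], ShiftLimits(100, 20.0))` returns `["panic_threshold", "quality_collapse"]`: the queue is over its limit and the median review time (12 seconds) is below the 20-second floor.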

Guardrails: Workflow Governors

Threshold and boundary ownership

Own the boundaries. Define automation scope, risk tiers, thresholds, escalation rights, partnership boundaries, and residual-risk ownership.

Stop conditions:

- Scope breach: workflow expands beyond approved use case.

- Threshold drift: tolerances are exceeded without a change record.

- Override abuse: override rate exceeds ceiling or lacks justification.

- Regulatory change: a new requirement invalidates the control design.

- Vendor update risk: model update occurs without governance sign-off.

Evidence logged:

- Control ID or workflow ID.

- Risk tier and decision-rights mapping.

- Threshold register entry with metric and tolerance.

- Residual-risk statement and owner signature.

- Boundary or escalation change record.
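
A threshold register entry pairs a metric with a tolerance, which makes threshold drift and override abuse checkable. The sketch below assumes that "without a change record" means no change record has been filed against the control, and that every override carries a free-text justification; all names and parameters are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThresholdEntry:
    """One row of a hypothetical threshold register."""
    control_id: str
    metric: str
    tolerance: float    # maximum acceptable value of the metric


def governor_findings(entry: ThresholdEntry, observed: float,
                      change_records: set[str],
                      override_justifications: list[str],
                      decisions: int, override_ceiling: float) -> list[str]:
    """Check one control for threshold drift and override abuse."""
    findings = []
    # Threshold drift: the metric is out of tolerance and no change
    # record has been filed against this control.
    if observed > entry.tolerance and entry.control_id not in change_records:
        findings.append("threshold_drift")
    # Override abuse, part 1: the override rate exceeds its ceiling.
    if decisions and len(override_justifications) / decisions > override_ceiling:
        findings.append("override_rate_exceeded")
    # Override abuse, part 2: any override logged without a justification.
    if any(not j.strip() for j in override_justifications):
        findings.append("unjustified_override")
    return findings
```

Keeping the tolerance in the register, rather than hard-coded in the workflow, means any change to it leaves the change record the governors are accountable for.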

Guardrails: Independent Assurance

Audit-grade verifiability

Prove defensibility. Verify that dialogue is genuine, decisions are reproducible, controls are real, and the partnership produces audit-grade evidence rather than theatre.

Stop conditions:

- Non-reproducibility: a decision cannot be reconstructed from logs.

- Missing evidence: key sources, rationale, or attribution are absent.

- Control bypass: stop-work authority exists on paper but not in production.

- Sampling failure: false-negative rate is unacceptable in high-risk classes.

Evidence logged:

- Audit sample set with size and risk weighting.

- Reproducibility test results and failure causes.

- Evidence-pack completeness checklist.

- Partnership verification record.

- Findings and remediation owner.
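
A reproducibility test can be sketched as replaying each logged decision through the same decision rule and comparing outcomes, after first checking that the evidence pack is complete. This assumes decisions are deterministic given their logged inputs; the required field list and the `decide` callable are hypothetical stand-ins for whatever the deployment actually records.

```python
from typing import Callable

# Hypothetical minimum evidence pack for one logged decision.
REQUIRED_FIELDS = ("case_id", "trigger", "inputs", "action",
                   "operator_id", "timestamp")


def missing_fields(record: dict) -> list[str]:
    """Evidence-pack completeness: required fields that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not record.get(name)]


def reproducibility_test(records: list[dict],
                         decide: Callable[[dict], str]) -> dict[str, str]:
    """Replay each logged decision; map case ID to the cause of any failure."""
    failures = {}
    for record in records:
        case_id = record.get("case_id") or "<no case id>"
        gaps = missing_fields(record)
        if gaps:
            failures[case_id] = "missing evidence: " + ", ".join(gaps)
        elif decide(record["inputs"]) != record["action"]:
            failures[case_id] = "replayed action differs from logged action"
    return failures
```

The returned mapping doubles as the "reproducibility test results and failure causes" entry: each failed case comes with the reason it could not be defended.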

Behavioral sync: Robopsychologists

Trust and attention calibration

Calibrate trust and attention. Detect automation bias, UI-induced errors, over-trust, under-trust, and collaboration breakdowns that quietly train humans to stop thinking.

Stop conditions:

- Automation-bias spike: humans mirror model outputs at abnormal rates.

- Attention collapse: decision times cluster unnaturally low.

- UI-induced error: an interface cue correlates with misroutes.

- Over-trust pattern: confidence cues drive compliance without evidence.

- Dialogue avoidance: operators stop using the available judgement surface.

Evidence logged:

- Behavioral-signal deviation.

- Observed failure mode: bias, fatigue, avoidance, or trust miscalibration.

- Proposed mitigation: interface change, training, or threshold adjustment.

- Partnership recalibration record.

- Post-change measurement baseline.
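
The automation-bias and attention-collapse signals can be approximated from two measurements per review window: how often humans agree with the model, and what share of decisions are implausibly fast. A minimal sketch, assuming a known baseline agreement rate; the margin, the 3-second "fast" cut-off, and the 20% share are hypothetical defaults that a real deployment would calibrate.

```python
def behavioral_signals(human: list[str], model: list[str],
                       decision_seconds: list[float],
                       baseline_agreement: float, margin: float = 0.05,
                       fast_seconds: float = 3.0,
                       max_fast_share: float = 0.2) -> dict[str, float]:
    """Flag automation-bias spikes and attention collapse for one review window."""
    if not human or len(human) != len(model) or len(human) != len(decision_seconds):
        raise ValueError("need one human decision, model output, and timing per case")
    # Automation bias: how often the human simply mirrors the model.
    agreement = sum(h == m for h, m in zip(human, model)) / len(human)
    # Attention collapse: share of decisions made implausibly fast.
    fast_share = sum(t < fast_seconds for t in decision_seconds) / len(decision_seconds)
    signals = {}
    if agreement > baseline_agreement + margin:
        signals["automation_bias_spike"] = agreement
    if fast_share > max_fast_share:
        signals["attention_collapse"] = fast_share
    return signals
```

High agreement on its own does not prove bias, since the model may simply be right; the signal becomes meaningful when it coincides with fast decisions, which is why the two are measured together.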

Behavioral sync: AINthropologists

Workflow culture mapping

Map the real culture of the workflow. Identify workarounds, incentive distortions, translation fractures, and places where people and systems learn to lie to each other.

Stop conditions:

- Workaround cluster: unofficial procedure becomes the real system.

- Incentive inversion: metrics reward speed over defensibility.

- Translation fracture: recurring misunderstanding across contexts.

- Trust breakdown: stakeholders contest outputs because legitimacy has failed.

- Shadow practice: designed partnership and actual practice diverge.

Evidence logged:

- Observed practice versus stated procedure.

- Incentive-distortion map.

- Risk translation into safety, ESG, audit, or procurement exposure.

- Practice-versus-design record.

- Workflow redesign tasks.

Outputs: Operating deliverables

- Two-lane operating map separating model-development labor from live execution-governance labor.

- Role mandates for control room operators, operations leads, workflow governors, assurance, robopsychology, and AINthropology.

- Stop-condition register for provenance breaks, policy mismatches, scope breaches, threshold drift, control bypasses, attention collapse, and workaround clusters.

- Evidence and logging standard covering case IDs, triggers, source checks, dialogue records, restart warrants, residual-risk statements, reproducibility tests, and remediation owners.

- Threshold register and restart-warrant pattern for pausing and safely resuming live workflows.
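
The restart-warrant pattern is asymmetric by design: stopping should be cheap, resuming should require evidence. A minimal sketch as a two-state machine, assuming a warrant names the workflow, an approver, and a rationale; `Workflow`, `RestartWarrant`, and the log tuple format are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class State(Enum):
    RUNNING = "running"
    PAUSED = "paused"


@dataclass(frozen=True)
class RestartWarrant:
    """Hypothetical record authorising a paused workflow to resume."""
    workflow_id: str
    approver: str
    rationale: str


class Workflow:
    def __init__(self, workflow_id: str) -> None:
        self.workflow_id = workflow_id
        self.state = State.RUNNING
        self.log: list[tuple[str, str, str]] = []

    def stop(self, trigger: str, operator_id: str) -> None:
        # Stopping is deliberately cheap: any steward may pause, no approval needed.
        self.state = State.PAUSED
        self.log.append(("stop", trigger, operator_id))

    def restart(self, warrant: RestartWarrant) -> None:
        # Resuming is deliberately expensive: it needs a complete, matching warrant.
        if self.state is not State.PAUSED:
            raise RuntimeError("workflow is not paused")
        if warrant.workflow_id != self.workflow_id:
            raise ValueError("warrant is for a different workflow")
        if not warrant.approver or not warrant.rationale.strip():
            raise ValueError("warrant needs a named approver and a rationale")
        self.state = State.RUNNING
        self.log.append(("restart", warrant.rationale, warrant.approver))
```

Because both transitions append to the same log, every pause and every resumption leaves a paired trail that Operations Leads and Independent Assurance can later inspect.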