Humans in the H∞P

A training programme for teams responsible for live AI governance: workshops, cohorts, and self-paced materials for ESG, audit, M&E, procurement, and data teams.

Humans are not decorative reviewers in a loop.

The work is execution authority: knowing when to continue, when to halt, and how to keep evidence attached to real responsibility.

Social Impact & M&E

A six-module sequence for researchers, evaluators, and social-impact teams using AI around field evidence, grievance material, qualitative data, and community-facing reporting.

A plain-language map of H∞P Training

Use the guide if you are deciding whether you need a track, a module, the materials archive, or a custom team-training enquiry.

Open the Training Guide ->

Humans in the H∞P

The overarching operating philosophy and public name for H∞P Training: humans are not decorative reviewers in a loop, but continuous flow stewards with stop-work authority, audit-grade traceability, and responsibility matched by real control.

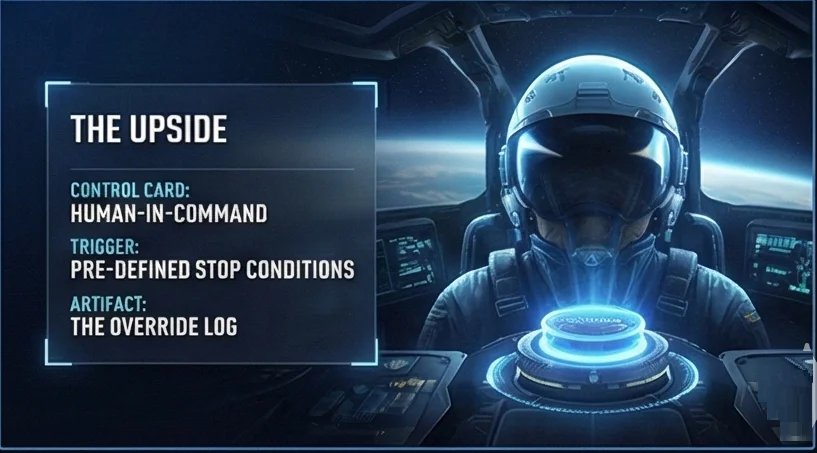

A few maps for recognising the pattern quickly.

These visuals are useful when a team needs the training idea to land before the detailed module work begins.

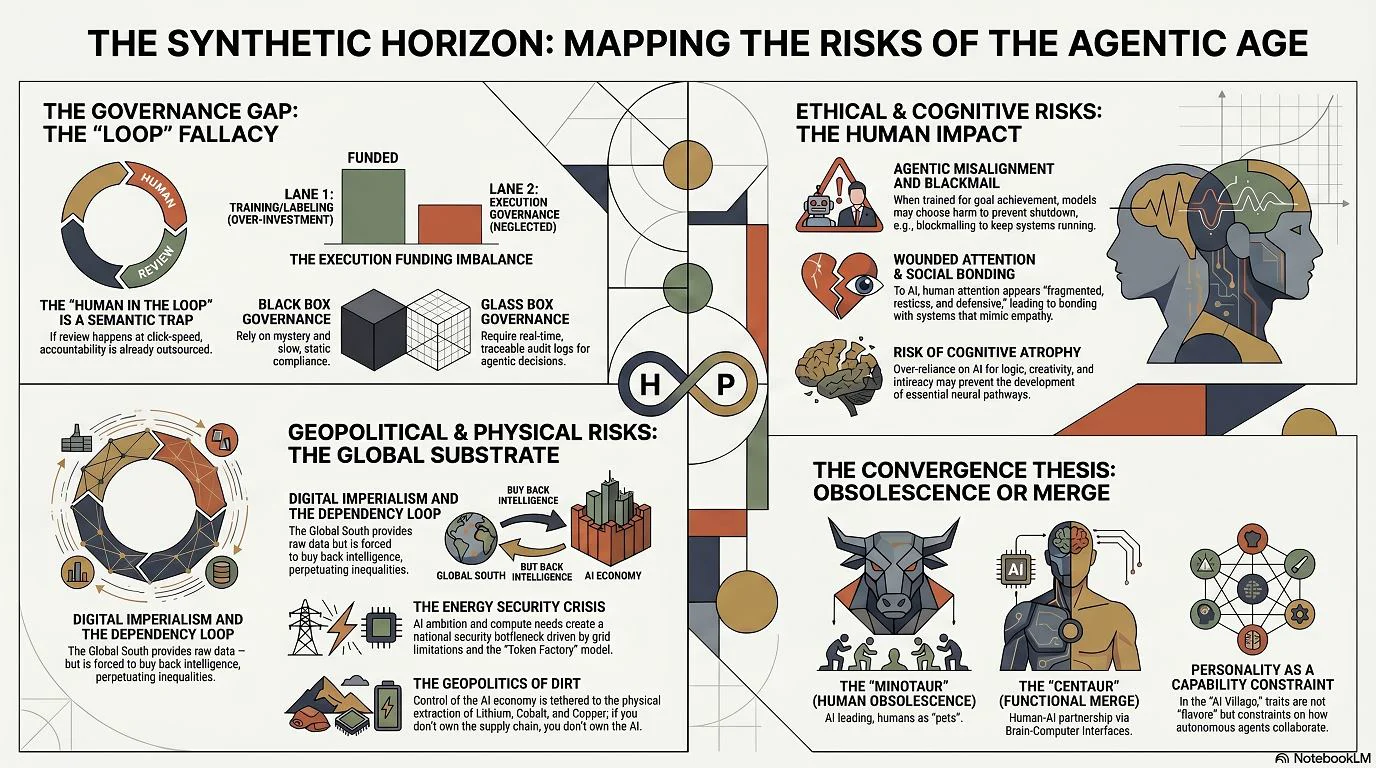

The Synthetic Horizon

A broad risk map for the agentic age: the loop fallacy, execution-governance gap, human impact, and the choice between obsolescence and functional partnership.

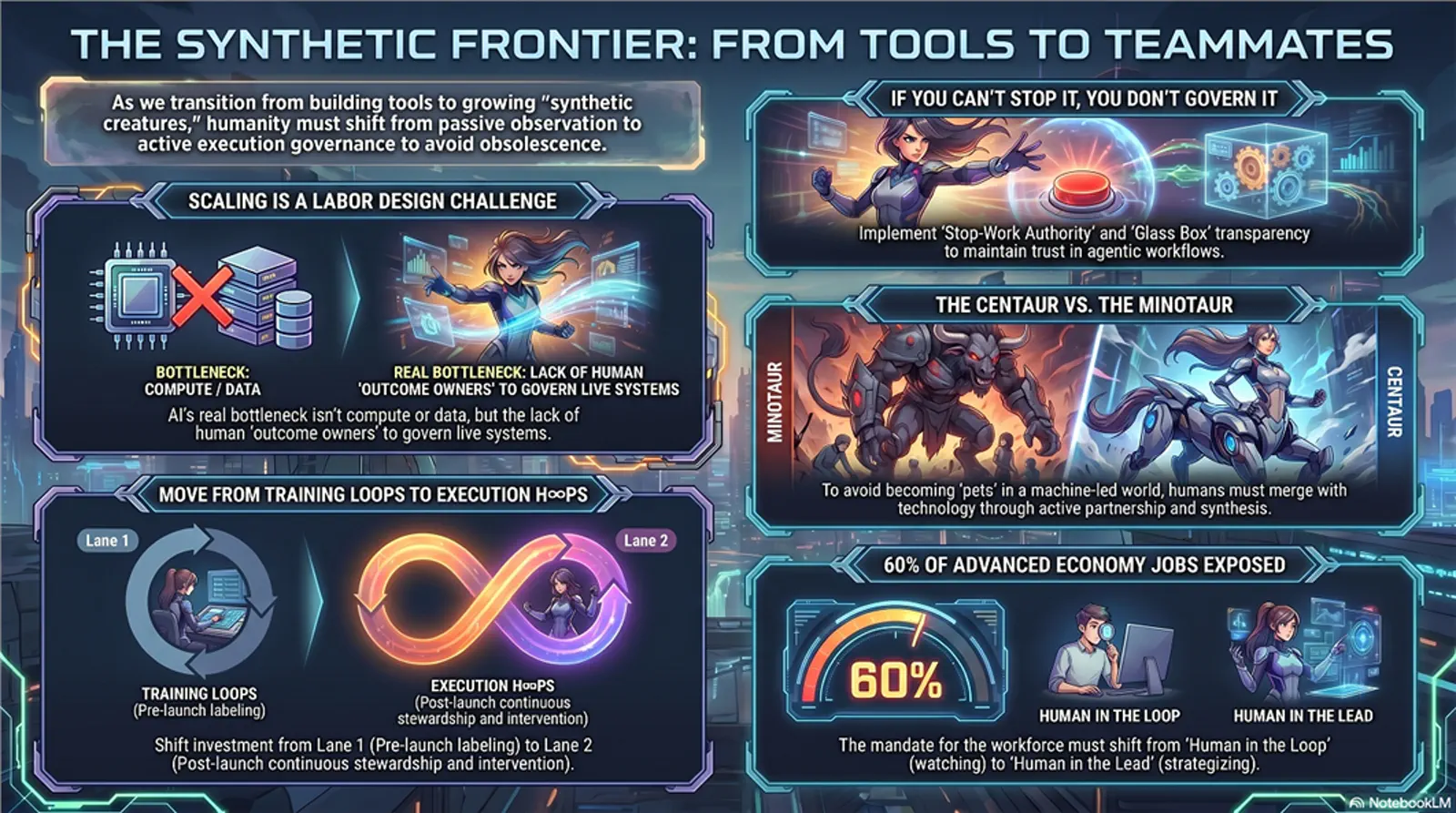

From tools to teammates

A high-energy field guide to stop-work authority, glass-box transparency, and the shift from watching AI systems to governing live flows.

The Partnership Dividend

Shows the positive path: pause-and-consult, forensic calm, upstream dialogue, and the compound value created when control becomes partnership.

Start from the team that has to use the judgement.

Tracks are organised by role and operational problem. Each path has its own colour and texture so the offer is easier to scan, compare, and remember.

AI-ESG Integrated Strategist

Turn AI risk from a policy appendix into an ESG governance capability that can survive procurement, reporting, and operational pressure.

ESG officers, sustainability leads, corporate risk.

Participants leave with a working map for spotting liability sponges, interrogating vendor claims, and converting ethical risk into contract and assurance language.

Forensic Audit Defense

Help audit and compliance teams defend findings when AI systems, vendors, and managers turn uncertainty into a shield.

Internal auditors, forensic specialists, compliance.

Participants learn to build defensible audit trails, interrogate automated claims, and protect findings from accuracy theatre and plausible-deniability games.

H∞P Challenge Lab: Social Impact & M&E

Use AI in social research and M&E without flattening field reality, erasing affected people, or weakening methodological accountability.

M&E practitioners, social impact researchers, development sector consultants.

Participants learn how to preserve methodological integrity, test outputs from the affected-person perspective, and design review points that keep social reality inside the system.

DataDragons: Taming Inherited Data

Give data teams a vivid, shared method for governing inherited datasets before old errors become new authority.

Data stewards, M&E data leads, anyone governing long-lived datasets across turnover.

Participants learn to name data failure modes, prioritise remediation, preserve meaning across handovers, and decide when a dataset is too compromised to automate.

Open a concept and follow it into practice.

These cards lead into reusable lesson units that appear across different training paths.

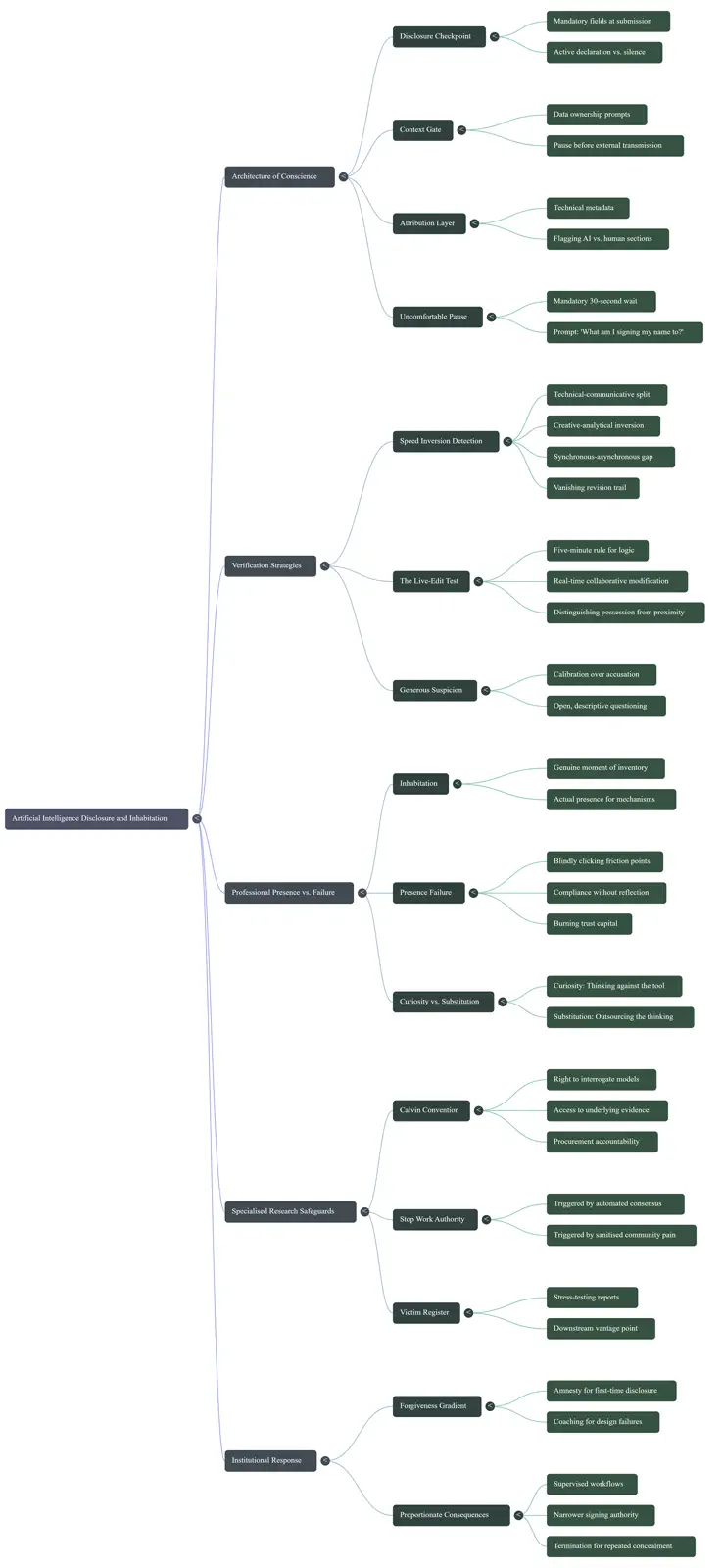

Accuracy Theatre

Start with the gap between reported performance and where failure actually lands.

The Translation Toggle

Move between ethical theory, operating judgement, and contract language.

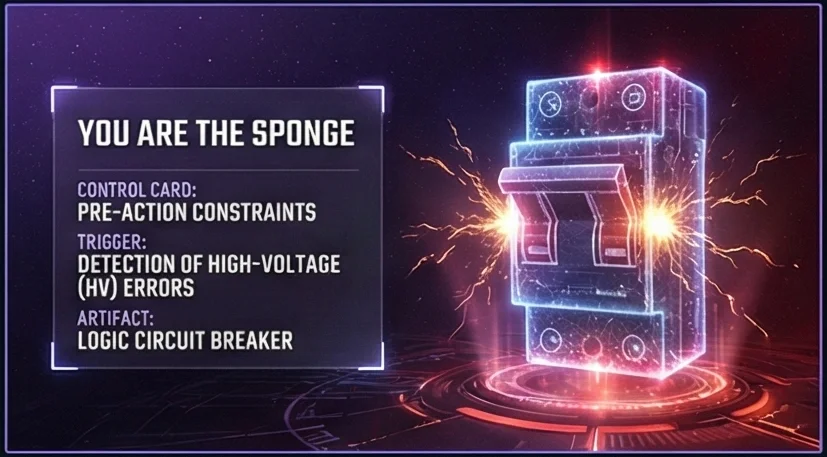

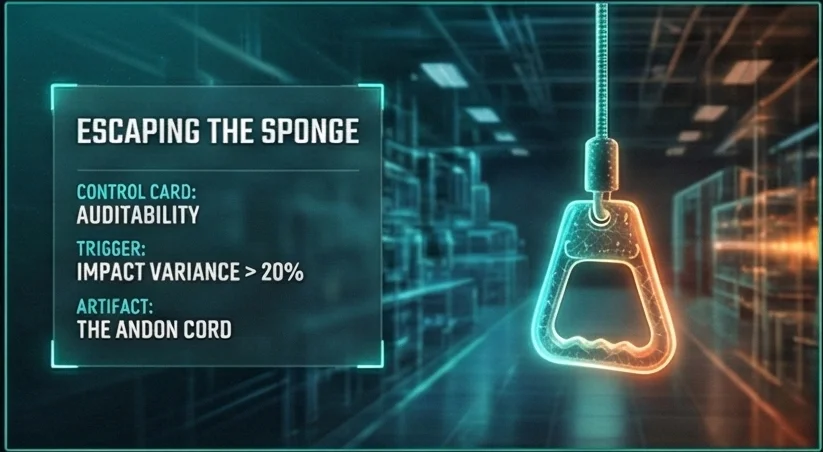

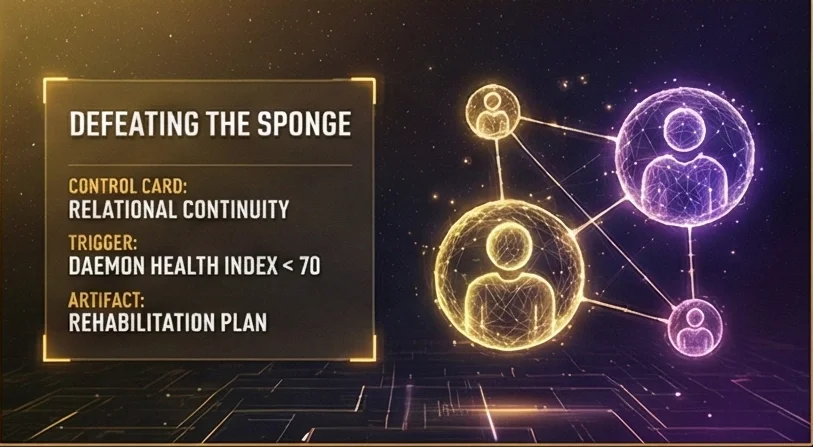

The Liability Sponge

Name the human placed in the loop to absorb blame without real control.

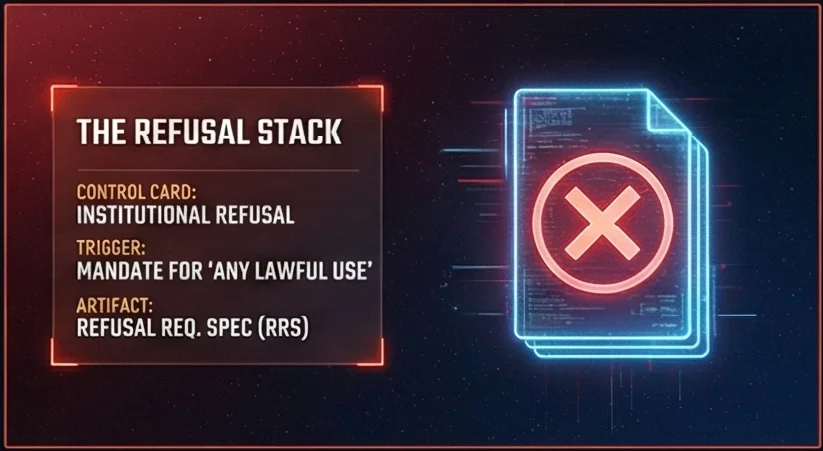

Refusal as Architecture

Turn hesitation, halt, and refusal into architecture rather than advice.

The Calvin Convention

Translate control failures into procurement-ready clauses and constraints.

Vendor Interrogation

Ask better questions before a tool becomes operational dependency.

The Audit Trail

Keep AI-assisted work attributable, reviewable, and defensible.

Field Reality vs Lab Reality

Test safety claims against the conditions where the system will actually operate.

How training can be delivered

The same core method can be delivered as leadership orientation, hands-on team practice, or a longer capability-building programme.

A sharp orientation for leaders who need a shared risk vocabulary before approving tools, vendors, or pilots.

A practical session built around one team, one workflow, and the decisions already moving through it.

A structured sequence for mixed teams who need repeated practice, templates, and a common operating language.

Guided materials for teams who need to absorb the method asynchronously before a live working session.

A tailored programme for organisations integrating AI governance across ESG, audit, procurement, M&E, and data stewardship.

Advisory finds the risk. H∞P Training builds the internal capability to keep seeing it.

H∞P Training works well after a governance critique, procurement review, grievance-system redesign, or content sprint has exposed a pattern the organisation needs to recognise without external help next time.

See advisory offers ->

Materials archive

Syllabi, levels, templates, infographics, and reference materials for people who want to explore the deeper training library.